Last week OpenAI finally released their computer-using agent Operator, and it is now possible to ask an AI to buy groceries, book restaurants, search LinkedIn and collect relevant information for your next newsletter. It’s still a very early version, still in “preview”, and still based on GPT-4o, but it represents the first steps towards a future where everything you do on your computer can be done 100 times faster by an AI agent.

However, the most impressive release of the past week was not Operator but DeepSeek R1 from the Chinese startup DeepSeek. Released as open source under an MIT license, it matches OpenAI o1 on many benchmarks but costs up to 50 times less. Some of the techniques used by DeepSeek were reverse-engineered from OpenAI o1, but many parts of its implementation are novel, and compared to current state-of-the-art models it was cheap to train as well.

Other news this week includes the decision to start using humanoid robots to build iPhones at Foxconn factories, a new “citations” API from Anthropic, a new API from Perplexity called Sonar, and Hunyuan3D 2.0, a major new version of the amazing text-to-textured-3D tool.

THIS WEEK’S NEWS:

- DeepSeek Challenges OpenAI with Open-Source R1 Model

- Google Launches Gemini 2.0 Flash Thinking with Million-Token Context Window

- Humanoid Robots to Build iPhones at Foxconn Factories

- Tech giants investing $500bn in ‘Stargate’ to build AI in the US

- Anthropic Launches Citations API

- OpenAI Launches Computer Using Agent “Operator”

- Codeium Windsurf Gets Major Upgrade

- Perplexity Launches Sonar AI Search API

- Perplexity Launches AI Assistant for Android

- Tencent Launches Hunyuan3D 2.0: Major Leap in AI-Powered 3D Generation

DeepSeek Challenges OpenAI with Open-Source R1 Model

The News:

- The Chinese AI startup DeepSeek released DeepSeek-R1 on January 20, a new open-source AI model that rivals OpenAI’s o1 in performance while being significantly more cost-effective.

- The model uses a massive 671 billion parameter architecture but activates only 37 billion parameters per forward pass through its Mixture of Experts (MoE) design, making it possible to run on cost-efficient hardware.

- DeepSeek-R1 achieves amazing benchmark scores, including 97.3% on MATH-500 and 91.8% on C-Eval, matching or exceeding OpenAI’s o1 performance.

- DeepSeek also released six smaller versions of R1 optimized for laptops, with their 32B model outperforming OpenAI’s o1-mini.

- Unlike proprietary models, R1 is released under the MIT license, allowing developers to freely modify and commercialize the model. The model supports context lengths up to 128K tokens and features advanced capabilities like chain-of-thought reasoning and self-verification.

- Microsoft CEO Satya Nadella acknowledged the significance of this release, stating “we should take the development out of China very, very seriously”.
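
To make the Mixture of Experts point above more concrete, here is a minimal sketch of top-k expert routing in plain Python. It is a toy illustration and not DeepSeek's actual routing code: the experts, the random gate scores, and the names `top_k_route` and `moe_forward` are all hypothetical. In a real model the gate scores come from a learned router applied to each token.

```python
import math
import random


def top_k_route(gate_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    top = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    # Softmax over the selected experts only, so their weights sum to 1.
    peak = max(gate_logits[i] for i in top)
    exps = [math.exp(gate_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_forward(x, experts, gate_logits, k):
    """Weighted sum of the k selected experts; the other experts are never called."""
    return sum(w * experts[i](x) for i, w in top_k_route(gate_logits, k))


# Toy setup (assumption): 8 experts, each just scales its input.
experts = [lambda x, s=s: s * x for s in range(1, 9)]
rng = random.Random(0)
gate_logits = [rng.uniform(-1, 1) for _ in experts]  # stand-in for a learned router

routes = top_k_route(gate_logits, k=2)
output = moe_forward(3.0, experts, gate_logits, k=2)

# Figures quoted above: 37B of 671B parameters are active per forward pass.
active_fraction = 37 / 671

print(f"experts used: {[i for i, _ in routes]}, output: {output:.3f}")
print(f"active share of parameters: {active_fraction:.1%}")
```

With the numbers quoted above, only about 5.5% of the parameters do work for any given token, which is where the compute savings come from. All parameters still have to be stored somewhere, so the saving is in computation per token rather than in total model size.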

Input Token Pricing – o1 vs R1

- DeepSeek-R1: $0.55 per million tokens

- OpenAI o1: $15.00 per million tokens

- Cost reduction: 96.3% cheaper

Output Token Pricing – o1 vs R1

- DeepSeek-R1: $2.19 per million tokens

- OpenAI o1: $60.00 per million tokens

- Cost reduction: 96.3% cheaper

Cached Input Pricing – o1 vs R1

- DeepSeek-R1: $0.14 per million tokens

- OpenAI o1: $7.50 per million tokens

- Cost reduction: 98.1% cheaper
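
The percentages above are easy to check yourself. The short sketch below recomputes them from the listed prices and also shows the price ratio; the dictionary layout and function names are just illustrative choices.

```python
# Prices in USD per million tokens, as listed above.
PRICES = {
    "input": {"r1": 0.55, "o1": 15.00},
    "output": {"r1": 2.19, "o1": 60.00},
    "cached_input": {"r1": 0.14, "o1": 7.50},
}


def reduction_percent(cheaper, pricier):
    """How much cheaper the first price is, as a percentage of the second."""
    return (1 - cheaper / pricier) * 100


def price_ratio(cheaper, pricier):
    """How many times more expensive the second price is."""
    return pricier / cheaper


summary = {
    kind: (reduction_percent(p["r1"], p["o1"]), price_ratio(p["r1"], p["o1"]))
    for kind, p in PRICES.items()
}

for kind, (pct, ratio) in summary.items():
    print(f"{kind}: {pct:.2f}% cheaper, o1 costs {ratio:.1f}x as much")
```

The ratio comes out at roughly 27x for regular input and output tokens and roughly 54x for cached input, which is consistent with the “up to 50 times less” figure.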

What you might have missed: “How Did They Make an OpenAI-Level Reasoning Model So Damn Efficient?” Here is a great discussion thread on Reddit going through how DeepSeek trained R1, including techniques such as Cold Start Fine-Tuning and Reasoning-Oriented Reinforcement Learning.

My take: It’s hard to overstate just how amazing this release by DeepSeek is. It’s not only dramatically cheaper to use than o1, it’s also open source and released under the MIT license. The reported training cost was just $5.6 million (a figure that comes from the technical report for DeepSeek-V3, the base model R1 builds on), which is around the same price as GPT-3 back in 2020! The way they achieved this amazing feat is (1) leaning on large-scale reinforcement learning rather than relying mainly on traditional supervised fine-tuning, (2) implementing a Mixture-of-Experts architecture that activates only 37 billion of 671 billion parameters per forward pass, and (3) employing a four-stage training process that optimized resource usage. The team even summarized their approach in the research paper DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning. This is one of the most important launches in a long time in the open source AI community, and 2025 has just started!

Read more:

- Arxiv: DeepSeek-R1: Incentivizing Reasoning Capability in LLMs via Reinforcement Learning

- How has DeepSeek improved the Transformer architecture? | Epoch AI

Google Launches Gemini 2.0 Flash Thinking with Million-Token Context Window

https://deepmind.google/technologies/gemini/flash-thinking

The News:

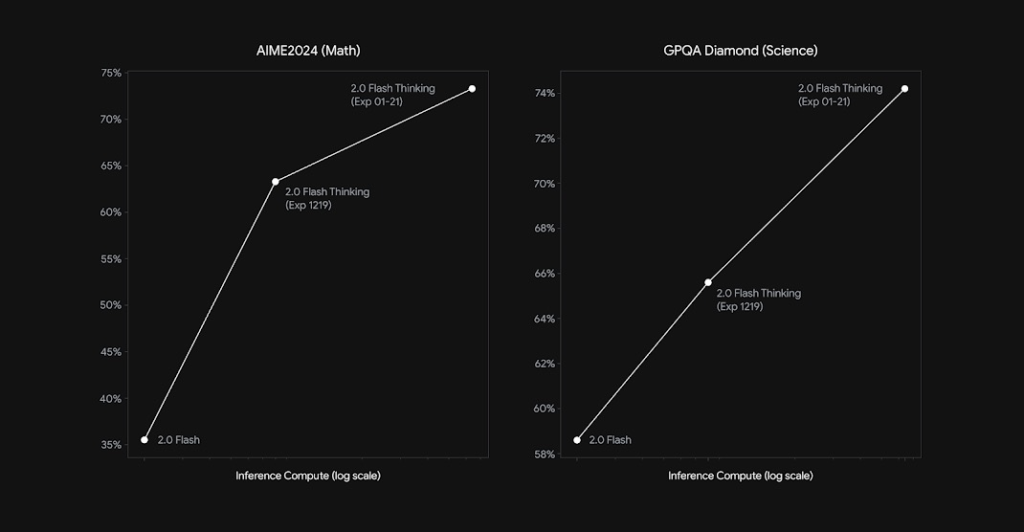

- Google has released Gemini 2.0 Flash Thinking Experimental (Exp-01-21), a new AI reasoning model that significantly improves upon its December predecessor with enhanced mathematical and scientific capabilities.

- The model now supports a massive 1-million token context window, up from the previous 32,000 tokens, enabling analysis of entire codebases or research papers in a single session.

- Benchmark performance shows amazing improvements, scoring 73.3% on AIME2024 (Math) and 74.2% on GPQA Diamond (Science) (see above).

- Native code execution support was also added, allowing the model to write and execute code during responses.

- The new version maintains multimodal capabilities, supporting both text and image inputs, while focusing on complex problem-solving tasks like programming, math, and physics.

My take: Context window is king when it comes to software programming, especially since it allows the model to know about the frameworks you use and how to use them properly. This is one of many reasons why Claude (200k+ tokens) is so much better than GPT-4o (128k tokens) when it comes to coding. But 1 million tokens? That’s a different league altogether. It will be interesting to see how it compares to Claude for coding later this year.

Humanoid Robots to Build iPhones at Foxconn Factories

The News:

- UBTech and Foxconn have signed a comprehensive long-term partnership to deploy humanoid robots in iPhone production.

- The Walker S1 robots have already completed two months of training at Foxconn’s Shenzhen facility.

- The Walker S1 robot stands 1.72 meters tall, weighs 76 kg, and can carry loads up to 16.3 kg while maintaining balance. It’s designed to handle complex tasks including sorting, assembly, and quality control.

- UBTech plans to launch the upgraded version called Walker S2 in Q2 2025, featuring improved precision for handling delicate components, advanced vision systems, and enhanced AI algorithms. UBTech aims to become the first company to achieve commercial mass production of humanoid robots through this partnership.

My take: With Figure’s humanoids in BMW factories, Apptronik’s in Mercedes, and now UBTech in Foxconn’s iPhone assembly lines, humanoid factory robots are quickly moving from fan fiction to reality. I believe the transition from human workers to robots for most routine work will go much faster than anyone could have ever imagined.

Tech giants investing $500bn in ‘Stargate’ to build AI in the US

https://openai.com/index/announcing-the-stargate-project

The News:

- OpenAI, SoftBank, Oracle, and MGX have joined forces to create The Stargate Project, a $500 billion investment initiative to build AI infrastructure across the United States over the next four years, with an immediate $100 billion commitment.

- The project aims to create over 100,000 American jobs and will begin with the construction of 10 data centers in Texas, with plans for nationwide expansion.

- Key technology partners include Arm, Microsoft, NVIDIA, and Oracle, with SoftBank handling financial responsibilities and OpenAI managing operations. Masayoshi Son will serve as chairman.

- Sam Altman, OpenAI’s CEO, called it “the most important project of this era” and emphasized its potential to revolutionize healthcare, stating “As this technology evolves, we will see diseases cured at unprecedented rates”.

- The project was announced at the White House by President Trump, who emphasized keeping AI advancements in America to compete with countries like China.

What you might have missed: In September 2024, CICC chairman Chen Liang announced that China could be investing over $1.4 trillion over 6 years into AI.

My take: How much does it cost to keep AI developing at an exponential rate? My guess is that it is fairly close to $500 billion over 4 years. Within a few months we will have the next-generation models rolling out, which will be significantly better than what we have today. There is a good chance the models of 2025 will already be good enough for all coding and all writing, and they will only improve from there. I guess the main question now is how this will affect us in Europe. Last year France announced an investment of $500 million into AI over a five-year period. It sounded like a lot of money back then, but now the US is investing 1,000 times more. But there is still hope: models like DeepSeek R1 above showed us that thanks to some clever design it’s possible to build models that are significantly cheaper to both train and use than the top models by OpenAI. The only way for us in the EU to keep up is to be smarter and faster.

Anthropic Launches Citations API

https://www.anthropic.com/news/introducing-citations-api

The News:

- Anthropic introduced Citations, a new API feature that enables Claude AI to provide detailed references to source documents it uses to generate responses. The feature is available on both Anthropic’s API and Google Cloud’s Vertex AI.

- Internal evaluations show that Citations improves recall accuracy by up to 15% compared to custom implementations.

- Citations processes documents by breaking them into chunks and automatically references specific sentences and passages when generating responses, eliminating the need for complex prompt engineering.

- Early adopters report significant improvements: Thomson Reuters integrated Citations into their CoCounsel AI platform for legal services, while Endex reported a 20% increase in reference accuracy for financial research.

- The feature works with Claude 3.5 Sonnet and Claude 3.5 Haiku models, with pricing based on document length – approximately $0.30 for a 100-page document with Sonnet and $0.08 with Haiku.
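
The per-document prices above can be roughly reconstructed from input token pricing. The sketch below assumes about 1,000 tokens per page and input prices of $3.00 (Sonnet) and $0.80 (Haiku) per million tokens. These are assumptions for illustration, the dictionary keys are just labels and not official model identifiers, and output tokens are ignored.

```python
# Assumed input prices in USD per million tokens (illustrative labels, not API model IDs).
INPUT_PRICE_PER_MILLION = {
    "claude-3-5-sonnet": 3.00,
    "claude-3-5-haiku": 0.80,
}

# Assumption: a typical page is roughly 1,000 tokens.
TOKENS_PER_PAGE = 1_000


def document_input_cost(pages, model):
    """Estimated cost in USD of sending a document of the given length as input."""
    tokens = pages * TOKENS_PER_PAGE
    return tokens / 1_000_000 * INPUT_PRICE_PER_MILLION[model]


for model in INPUT_PRICE_PER_MILLION:
    print(f"{model}: ${document_input_cost(100, model):.2f} per 100-page document")
```

Under these assumptions a 100-page document is about 100,000 input tokens, which lands on the $0.30 and $0.08 figures quoted above. Longer answers and repeated questions against the same document add to that.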

My take: The most challenging aspect of rolling out generative AI in professional applications like legal research, financial analysis, and customer support is the ability to verify and trust the AI-generated information. The Citations API is a fantastic first approach to solving this issue, and while it’s a bit pricey ($0.30 per 100-page document) the time savings most probably more than make up for it. I am currently integrating generative AI within several organizations and Citations will be a very welcome addition.

OpenAI Launches Computer Using Agent “Operator”

https://openai.com/index/computer-using-agent

The News:

- OpenAI released Operator, an AI agent that can autonomously perform web-based tasks like booking travel, making restaurant reservations, and shopping. The tool represents OpenAI’s first venture into autonomous AI agents that can interact with computer interfaces like humans do.

- Powered by a new Computer-Using Agent (CUA) model that combines GPT-4o’s vision capabilities with advanced reasoning, Operator can interact with websites by clicking buttons, navigating menus, and filling out forms.

- The agent operates in a dedicated browser window where users can monitor its actions and intervene when needed. It requires user confirmation for sensitive tasks like submitting orders or entering payment information.

- Operator is initially available only to US-based ChatGPT Pro subscribers ($200/month), with plans to expand to Plus, Team, and Enterprise tiers in the coming months. Launch in Europe will be delayed due to “regulatory hurdles” according to Sam Altman.

My take: For many tasks, Operator seems to perform quite well. The new CUA model is still based on GPT-4o, and I believe both performance and quality will improve immensely once all next-gen models begin rolling out later this year. Browser-based agents seem like a good start, but I can’t stop wondering where this will leave desktop productivity apps going forward if we somehow “get stuck” using AI agents exclusively in browser environments.

Codeium Windsurf Gets Major Upgrade

https://codeium.com/blog/windsurf-wave-2

The News:

- Windsurf is Codeium’s AI-first integrated development environment (IDE), offering developers AI-assisted coding capabilities with both free and paid tiers.

- Windsurf “Wave 2” introduces web search functionality through Cascade (the AI panel), allowing developers to search online resources using @web commands or specific URLs for API documentation and changelogs.

- The IDE now automatically learns and adapts to developers’ coding patterns, building upon Wave 1’s explicit memory feature to provide more personalized coding suggestions.

- Improved terminal shell integration enables better handling of virtual environments (venv) and more autonomous AI operation.

- Wave 2 also includes VPN support for corporate networks and introduces enterprise SaaS and hybrid deployment options.

My take: I still prefer Cursor as my main environment, but Windsurf is evolving rapidly. If you have used Wave 2 extensively, I would love to hear your thoughts and how it compares to Cursor.

Perplexity Launches Sonar AI Search API

https://twitter.com/perplexity_ai/status/1881779310840984043

The News:

- Last week Perplexity launched an API service called Sonar, allowing enterprises and developers to build the startup’s generative AI search tools into their own applications.

- Two service tiers are available: Basic Sonar ($5 / 1,000 searches + $1 / 750,000 words) for simple queries, and Sonar Pro ($5 / 1,000 searches + $3 / 750,000 input words + $15 / 750,000 output words) for complex tasks.

- Notable early adopters include Zoom, which integrated Sonar into its AI Companion 2.0 for real-time information access during video calls, and Doximity for clinical answer retrieval.
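
To get a feel for what the two tiers above cost in practice, here is a small estimator built only from the listed prices. It assumes the basic tier's $1 per 750,000 words applies to both input and output words, and the usage numbers in the example are made up.

```python
SEARCH_PRICE_PER_1000 = 5.00  # USD, same for both tiers
WORDS_PER_UNIT = 750_000

# USD per 750,000 words. Assumption: basic Sonar charges $1 for input and output alike.
TIERS = {
    "sonar": {"input": 1.00, "output": 1.00},
    "sonar-pro": {"input": 3.00, "output": 15.00},
}


def estimated_cost(tier, searches, input_words, output_words):
    """Estimated cost in USD for a given amount of usage on one tier."""
    prices = TIERS[tier]
    search_cost = searches / 1000 * SEARCH_PRICE_PER_1000
    input_cost = input_words / WORDS_PER_UNIT * prices["input"]
    output_cost = output_words / WORDS_PER_UNIT * prices["output"]
    return search_cost + input_cost + output_cost


# Hypothetical month: 10,000 searches, 1.5M input words, 750k output words.
usage = {"searches": 10_000, "input_words": 1_500_000, "output_words": 750_000}
for tier in TIERS:
    print(f"{tier}: ${estimated_cost(tier, **usage):.2f}")
```

For this example workload the search fee dominates on both tiers ($50 of the total), so the per-word difference between Sonar and Sonar Pro only starts to matter once responses get long.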

My take: If you have been trying to build your own “Perplexity” and integrate it into your workflows, you have most probably realized why Perplexity is worth over $9 billion. It is actually quite difficult to write a generative AI search service that works as well as Perplexity does. Well, now anyone can use Perplexity through their API, and I will be integrating this into many workflows in the coming weeks.

Perplexity Launches AI Assistant for Android

https://twitter.com/perplexity_ai/status/1882466239123255686

The News:

- Perplexity released a new AI assistant for Android devices that can perform multi-step tasks across different apps. The assistant marks Perplexity’s evolution from a search engine to an integrated mobile assistant capable of executing actions through other applications.

- The assistant can handle everyday tasks like booking dinner reservations, finding songs, calling rides, drafting emails, and setting reminders – all while maintaining context between different actions.

- The app features multimodal capabilities, allowing users to interact with their environment through the phone’s camera to identify objects or get information about what’s on screen. Supported app integrations include Spotify, YouTube, and Uber, with more apps planned for future updates.

My take: It’s an interesting approach by Perplexity, and with this AI Assistant they are competing directly with Google Gemini, on Google’s home turf. Both assistants offer screen context understanding on Android, and both offer features like setting reminders, composing messages, making reservations, booking rides and playing music. I guess if you already have a Perplexity subscription then you might be interested in Perplexity for Android; otherwise I think most Android users would be better off with Google Gemini.

Tencent Launches Hunyuan3D 2.0: Major Leap in AI-Powered 3D Generation

https://github.com/tencent/Hunyuan3D-2

The News:

- Tencent released Hunyuan3D 2.0, an advanced AI system that transforms text descriptions or single images into detailed 3D models within seconds, featuring a revolutionary two-stage generation pipeline for creating high-quality 3D assets.

- The new version introduces decoupled generation of geometry and texture, resulting in more refined geometric structures and richer texture colors compared to version 1.0.

- While version 1.0 used a multi-view diffusion model with 4-second generation time, version 2.0 employs a more sophisticated architecture with Hunyuan3D-DiT for shape generation and Hunyuan3D-Paint for texture synthesis.

- Performance metrics show Hunyuan3D 2.0 outperforms all existing open-source and closed-source models in geometry details, condition alignment, and texture quality.

My take: I was impressed with Hunyuan3D 1.0 when I wrote about it in Tech Insights 2024 week 46, but now Hunyuan3D 2.0 is already out and improves almost every aspect of it. If you are working with 3D assets you owe it to yourself to check this one out. It’s free to use, but if you have over 1 million active users you must contact Tencent for a commercial license.